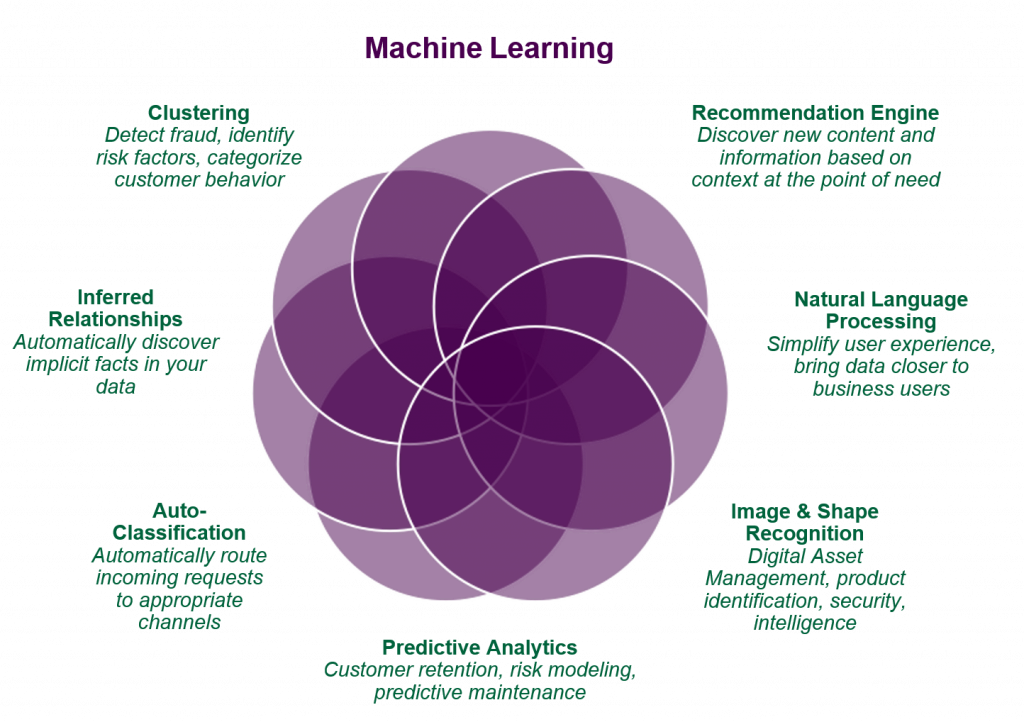

It has been nearly a decade since we started our foray into machine learning, NLP and AI. As early adopters and practitioners, we struggled through the beginning of it all with sub-optimal tools and processes, and have been incredibly lucky to benefit from the community effect of open source software.

Thank you, Apache, Google, OpenAI and Facebook. Your efforts are very much appreciated.

Unfortunately, most organizations, especially the large ones, do not get to take risky bets as early adopters and are often risk-neutral or risk-averse with their projects. It’s difficult to go out on a limb when there will be shareholder scrutiny on your misses.

Luckily, shareholders and boards of directors at most large organizations have heard of, and become increasingly comfortable with, some version of:

- business intelligence

- analytics

- machine learning

- artificial intelligence

- natural language processing

and realize that there is a benefit, both real and reputational, that comes with having deep insight into your processes, data and operations. And, of course, with being able to talk about it a bunch.

Let’s dive in, shall we?

The Low-Hanging Fruit

Supervised Learning for Marketing and Sales

While there are multiple learning styles (that is, approaches to training algorithms using data), the most common is supervised learning. We’ll start with this branch of data science, explain why it is considered low-hanging fruit for businesses planning to embark on an ML initiative, and describe the most common use cases.

Supervised machine learning assumes that the expected answer to a problem is unknown for upcoming data but has already been identified in a historic dataset. In other words, the historic data contains the correct answers, and the task of the algorithm is to learn from them and predict the answers for new data.

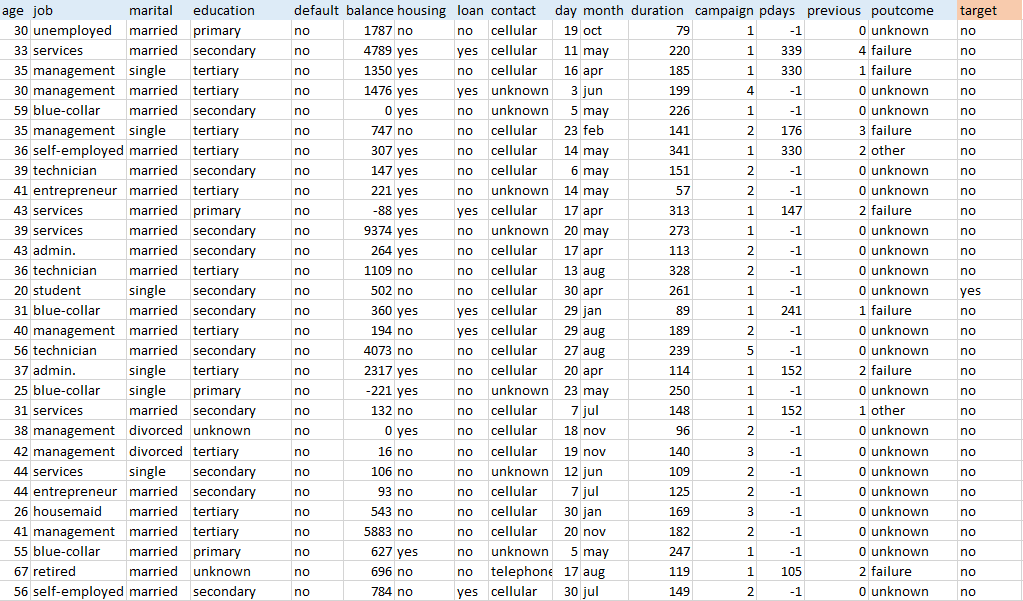

As an example, let’s have a look at a public dataset gathered during a 2012 marketing campaign. The institution running the campaign aimed to encourage its customers to subscribe to term deposits by calling them and pitching the service.

Datasets are usually tables, with data items (e.g. customers) organized in rows and variables (e.g. age, job, education, balance) in columns. Labeled datasets also have a target variable (the label), the value to be predicted in future data. In this dataset, the target variable records whether or not a customer subscribed to a term deposit after a call.
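To make the rows, columns and label concrete, here is a minimal sketch in plain Python. The variable names age, job, education and balance come from the description above; the column name `subscribed` and every value in the rows are invented for illustration and are not taken from the real dataset.

```python
# Illustrative rows only: these values are invented, not real campaign data.
rows = [
    {"age": 34, "job": "technician", "education": "secondary", "balance": 1200, "subscribed": "no"},
    {"age": 51, "job": "management", "education": "tertiary", "balance": 8300, "subscribed": "yes"},
    {"age": 28, "job": "services", "education": "secondary", "balance": 150, "subscribed": "no"},
]

TARGET = "subscribed"  # hypothetical name for the label column


def split_features_and_labels(rows, target):
    """Separate each row into its input variables and its label."""
    features = [{k: v for k, v in row.items() if k != target} for row in rows]
    labels = [row[target] for row in rows]
    return features, labels


features, labels = split_features_and_labels(rows, TARGET)
print(features[0])  # the variables for the first customer, without the label
print(labels)       # one label per customer
```

Each row is one customer, each key is one variable, and the label is kept apart because it is what a model would later be asked to predict.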

What to do with Sales and Marketing Data?

Training an ML algorithm means feeding this data into a machine using one of several mathematical methods. The process builds a model that can predict the target variable in future data. In this case, the task of the algorithm would be to classify data items into two categories (yes/no). Generally, supervised learning covers three main tasks:

Binary classification. The case of binary classification is described above. The algorithm classifies data into two categories.

Multiclass classification. This requires the algorithm to choose between more than two possible values of the target variable.

Regression. Regression models predict continuous values, while classification models predict categorical ones. For instance, predicting net profit as a measurement of the customer lifetime value is a standard regression problem.
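The difference between classification and regression can be sketched with two toy models in plain Python. This is only an illustration under stated assumptions: the data is invented, the function names are hypothetical, and a single-variable cut-off and a straight-line fit stand in for the richer methods a real project would use.

```python
def fit_threshold(values, labels):
    """Binary classification: choose the cut-off on one variable that
    misclassifies the fewest historic examples (predict "yes" at or above it)."""
    best_cut, best_errors = None, len(values) + 1
    for cut in sorted(set(values)):
        errors = sum((v >= cut) != (y == "yes") for v, y in zip(values, labels))
        if errors < best_errors:
            best_cut, best_errors = cut, errors
    return best_cut


def fit_line(xs, ys):
    """Regression: ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


# Invented historic data: account balance and whether the customer subscribed.
balances = [150, 900, 1200, 4000, 8300, 9500]
subscribed = ["no", "no", "no", "yes", "yes", "yes"]
cut = fit_threshold(balances, subscribed)


def predict_subscription(balance):
    """Categorical answer (yes/no) for a new customer."""
    return "yes" if balance >= cut else "no"


# Invented historic data: years as a customer and net profit from that customer.
years = [1, 2, 3, 4]
profit = [100.0, 200.0, 300.0, 400.0]
slope, intercept = fit_line(years, profit)


def predict_profit(n_years):
    """Continuous answer (a number) for a new customer."""
    return slope * n_years + intercept


print(predict_subscription(5000))
print(predict_profit(5))
```

The classifier returns one of a fixed set of categories, while the regression returns a number on a continuous scale. Multiclass classification follows the same pattern as the binary case, only with more than two possible labels.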

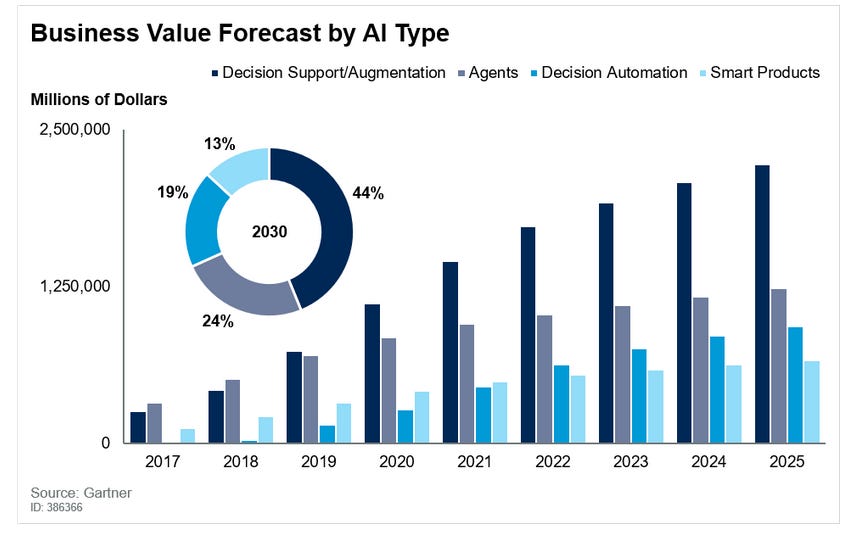

Is there something easier? The case for buying AI

Conversational AI, Agents, Chatbots and Self-Service

Chatbots have taken a quantum leap forward in user support, contributing substantially to the emergence of the modern service desk. Even in their earliest form, they heralded the promise of versatile new advances to come, such as sentiment tracking, NLP and machine learning.

As chatbots evolve, we are seeing a continuum of progress that will soon make it nearly impossible to tell the difference between human and artificial intelligence in service desk and customer service functions. I believe it’s enlightening to understand the chatbot journey, as it has evolved from the first generation to next-gen conversational AI that is unsupervised and context-aware.

Driven by the pandemic and Work from Home/Anywhere

In the beginning, remote work put heavy pressure on organizations: wait times expanded from a few hours to days and weeks, call center costs soared, and social distancing and changing expectations added their own challenges. Buying more service desk and customer support licenses was not the answer to these problems.

Creating a more agile approach called for out-of-the-box, instantly usable AI. That’s why there are now virtual agents and virtual assistants that enable enriched user engagement, along with concierge solutions and new platforms that can understand a request and do the job autonomously.

The outcome of the chatbot evolution is to dramatically diminish or even eliminate the need for historical data, experts and data scientists. The new technology requires no AI training, no complex manuals or professional services and no prep work such as data cleansing. Deploying AI chatbots need not take weeks and months; the solution can actually be found online within hours and immediately start to deliver automated, continuous value.

Chatbots have now arrived in the new AI era. As it comes of age, next-generation AI has evolved to be not a black box but a convenient, transparent, turnkey solution.

What about Finance? Legal? Operations?

Enter NLP, Intelligent Document Processing (IDP) and Document AI.

Document AI

The industry has evolved from OCR to solutions that use multiple AI technologies to address the bottlenecks. These solutions fall into two categories:

- The old-school approach: OCR

- The modern approach, which goes by various names, including:

  - Intelligent Data Processing

  - Intelligent Data Capture

  - Machine Learning OCR

  - Cognitive Capture

  - AI OCR

  - AI RPA

Elsewhere you will read how AI technology is being applied to solve unstructured data problems. Be cautious here: AI has become a buzzword that some vendors use to muddy the waters when describing what role AI actually plays in their solutions.

For now, the key point is this:

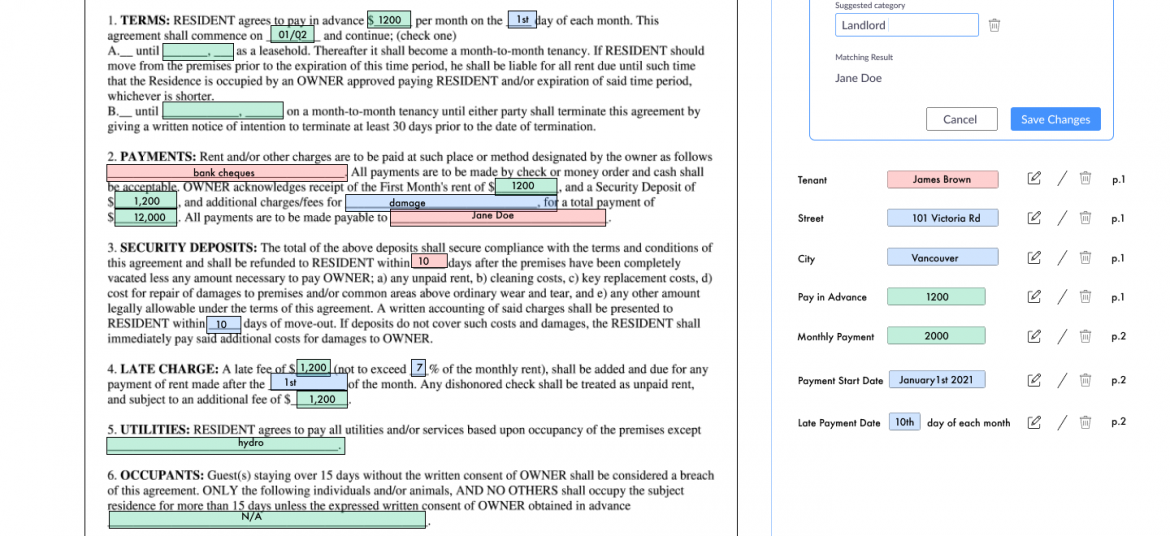

Intelligent Document Processing (IDP) can extract virtually all the information, understand the data, and create additional value from complex documents.

Three common problems Apogee’s Document AI can solve for you right now

Data Extraction From Annual Reports

Data Extraction From Technical Drawings

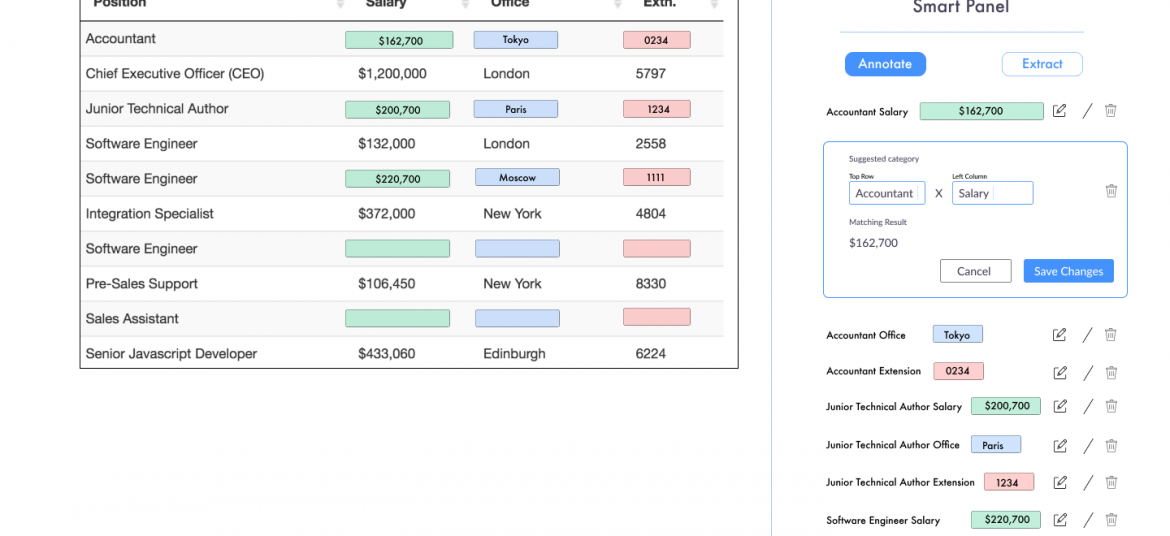

Data Extraction From Tables

The Apogee Suite of NLP and AI tools, made by 1000ml, has helped small and medium businesses in several industries, large enterprises and government ministries gain an understanding of the intelligence that exists within their documents, contracts and, more generally, any content.

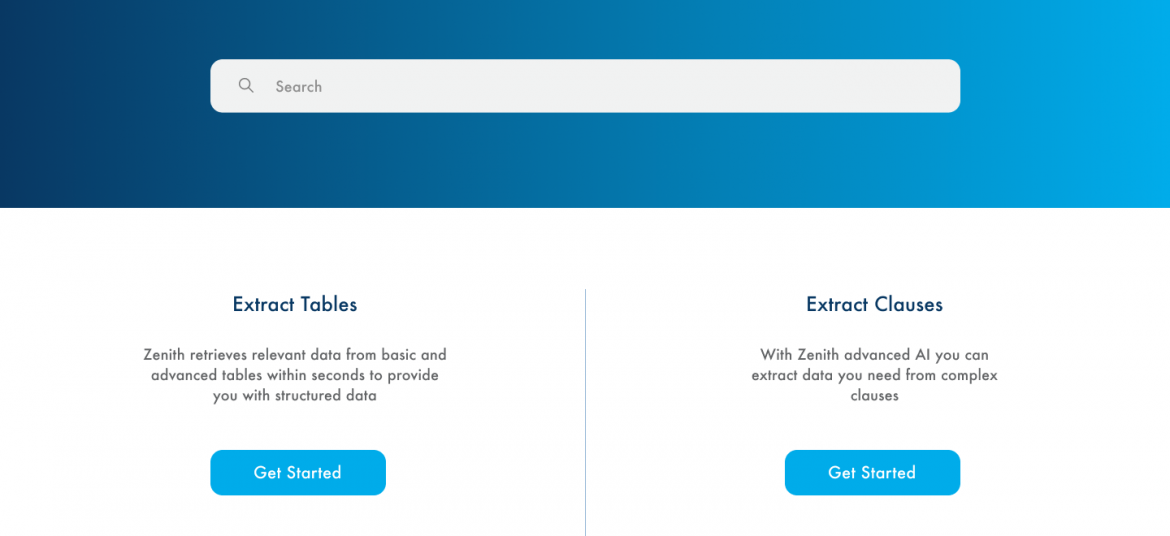

Our tools, Apogee, Zenith and Mensa, work together to allow for:

- Any document, contract and/or content ingested and understood

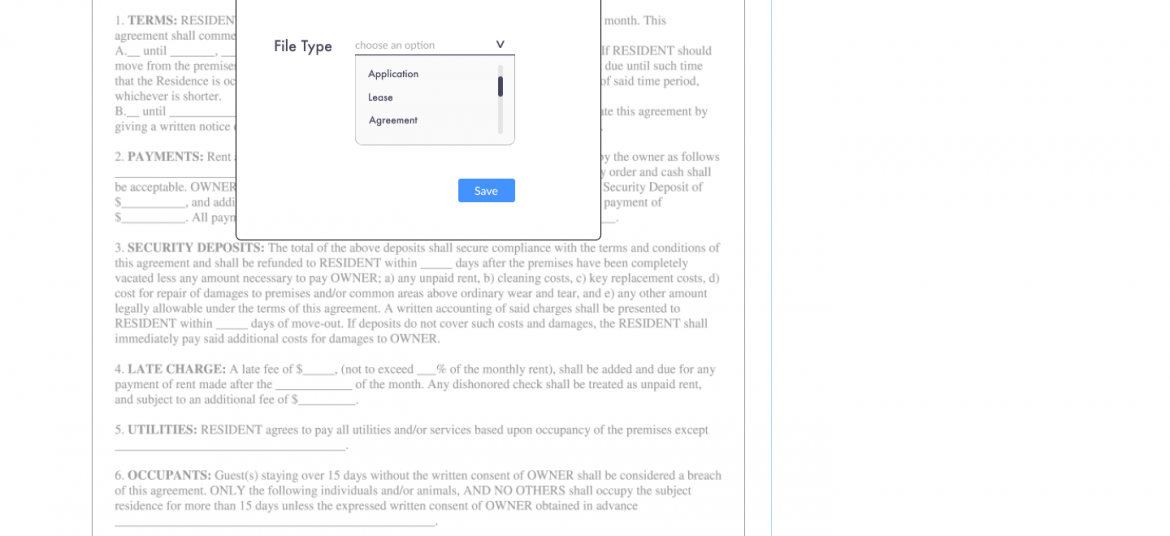

- Document (Type) Classification

- Content Summarization

- Metadata (or text) Extraction

- Table (and embedded text) Extraction

- Conversational AI (chatbot)

- Search, JavaScript SDK and API

- Document Intelligence

- Intelligent Document Processing

- ERP NLP Data Augmentation

- Judicial Case Prediction Engine

- Digital Navigation AI

- No-configuration FAQ Bots

- and many more

Check out our next webinar dates below to find out how 1000ml’s tool works with your organization’s systems to create opportunities for Robotic Process Automation (RPA) and automatic, self-learning data pipelines.